Echo

Overview

During the summer of 2025, I was selected as one of 30 students for the six-week Apple Maker Academy, a progression of the Apple Engineering Camp, where I furthered my skills in manufacturing, product design, and electrical engineering. Applying my experience from past projects, Flick, and FTC, I led the development of Echo alongside five exceptional team members. At the end of the six weeks, we pitched our product to a board of Apple executives in a ten-minute keynote presentation that included a live demo.

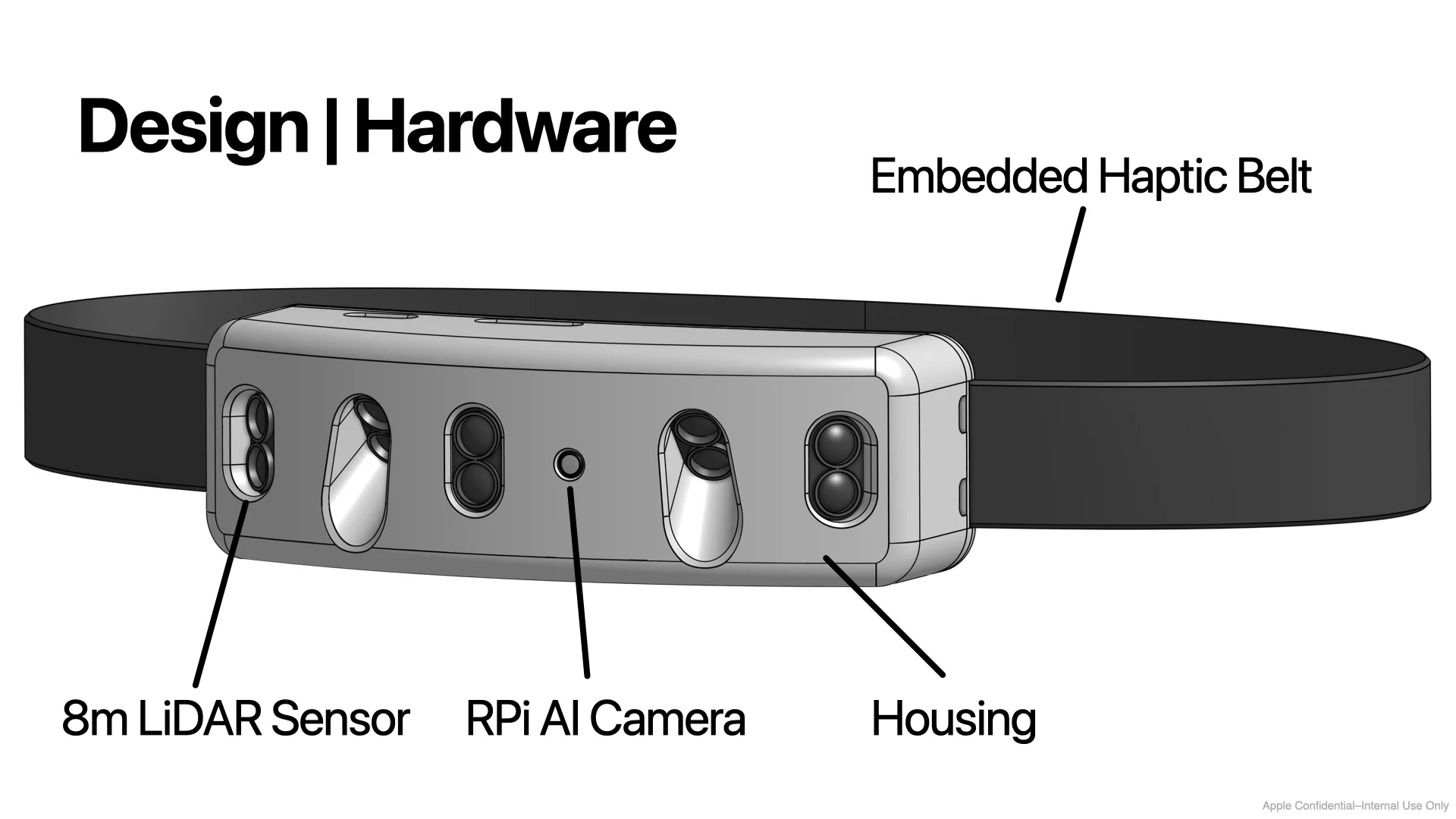

Echo is a wearable navigation device designed to help vision-impaired users navigate their environment safely. It uses LiDAR sensors and a Raspberry Pi AI Camera for real-time object detection, and it delivers multimodal feedback via haptics, Spatial Audio, and VoiceOver.

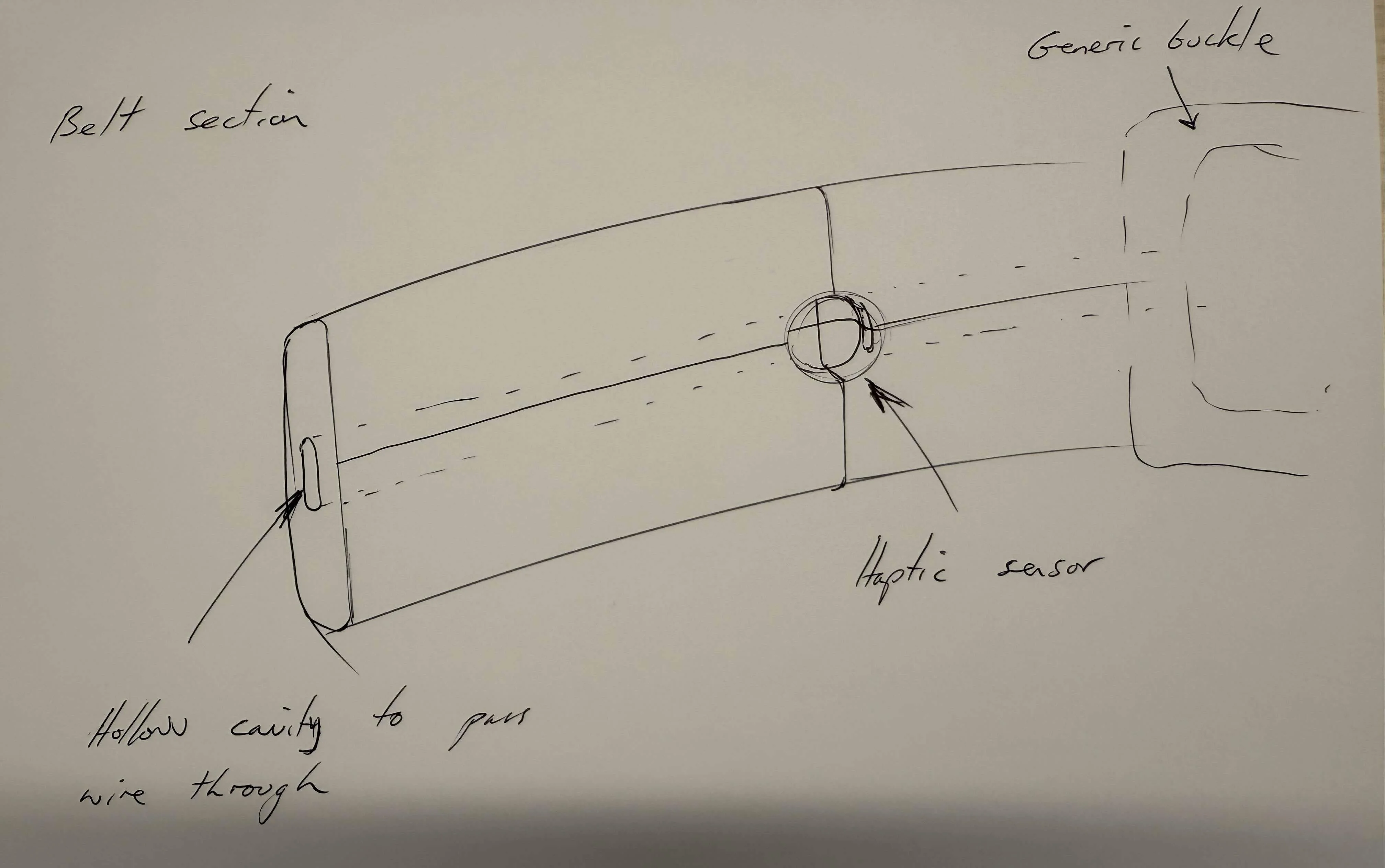

We wanted to make Echo as unobtrusive as possible, yet still have it serve as a clear symbol for those who are visually impaired, similar to the white cane. After brainstorming different form factors and placements on the body, we settled on a compact waist belt as the ideal solution.

Render of Echo

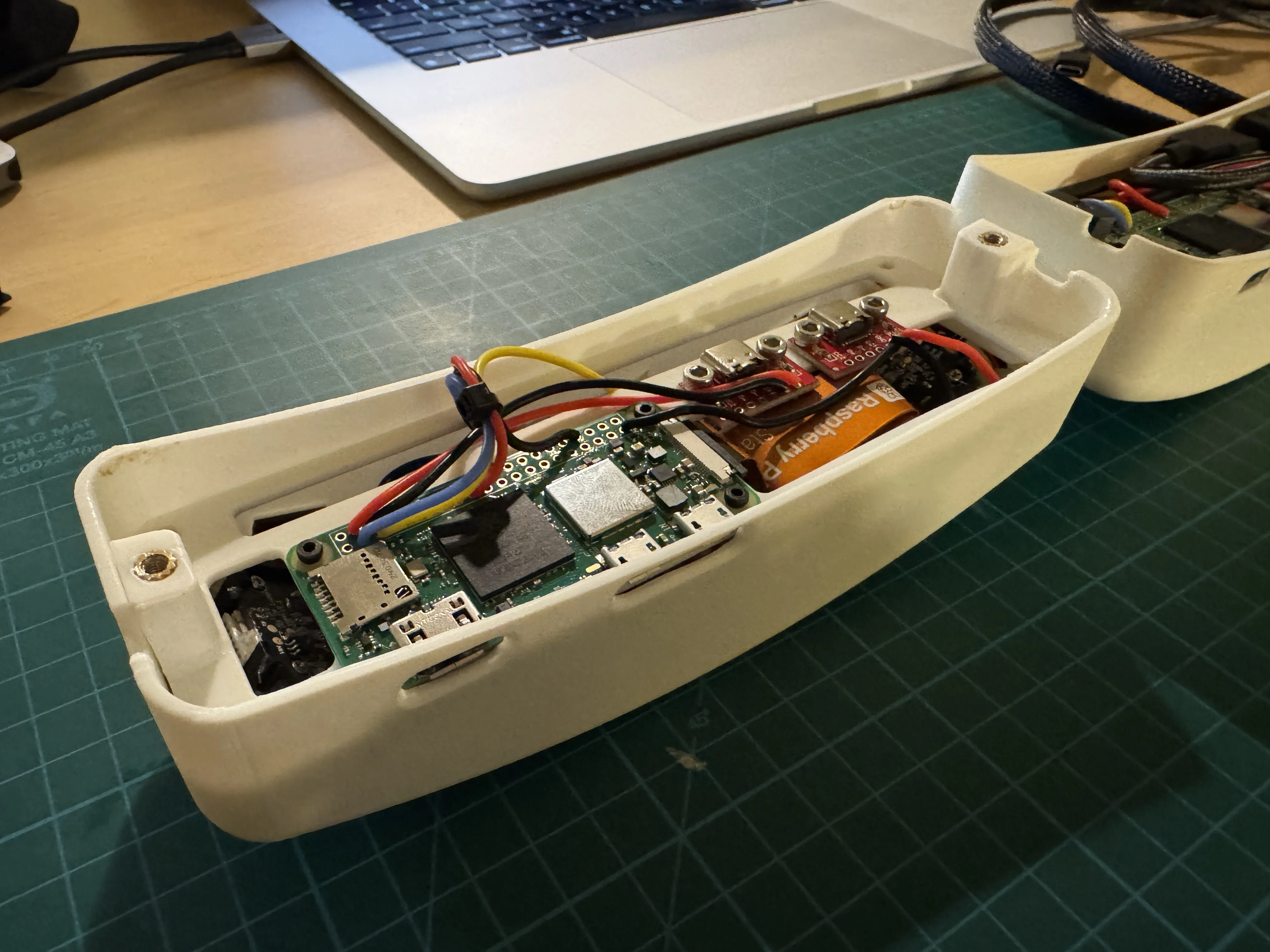

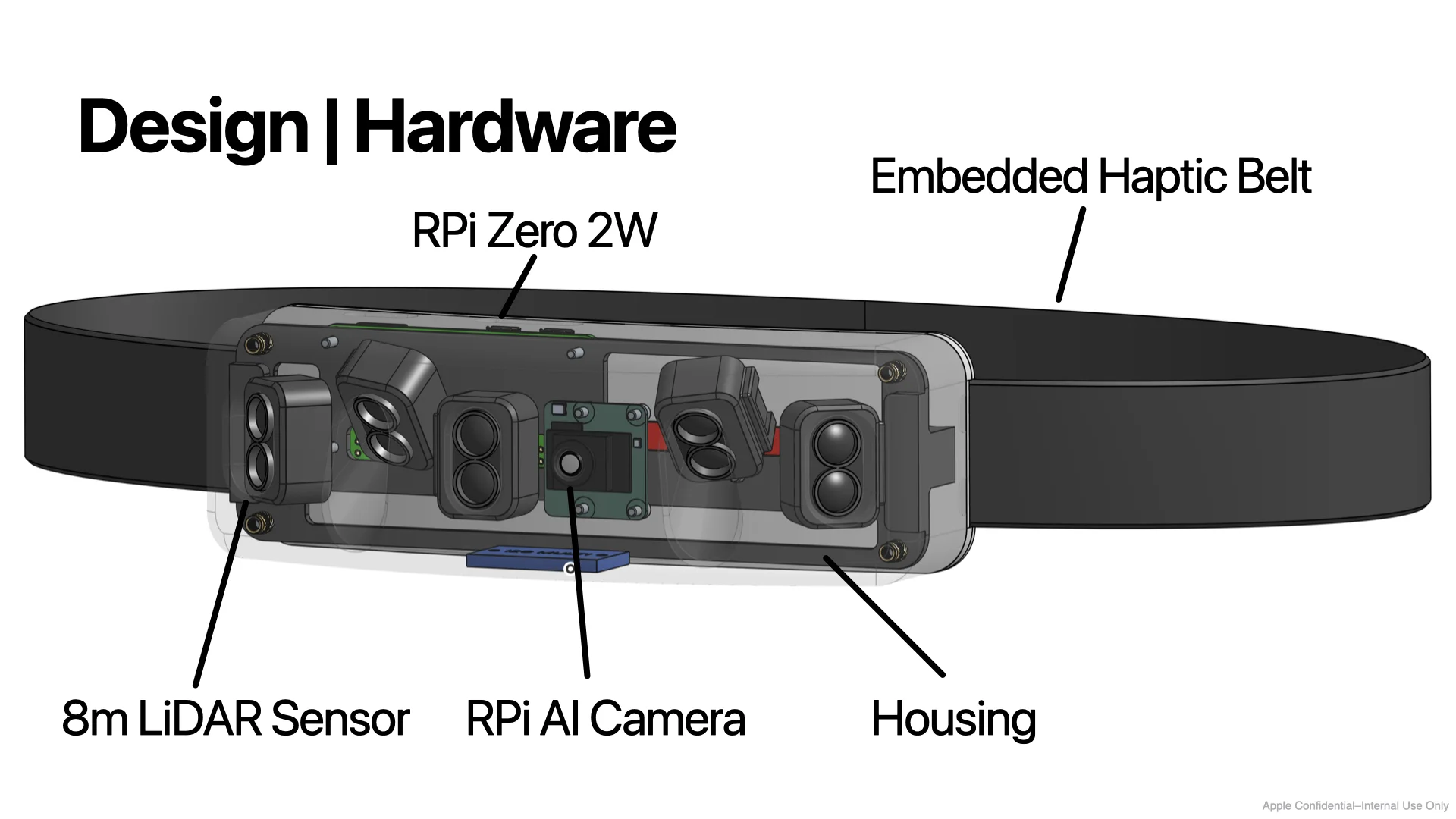

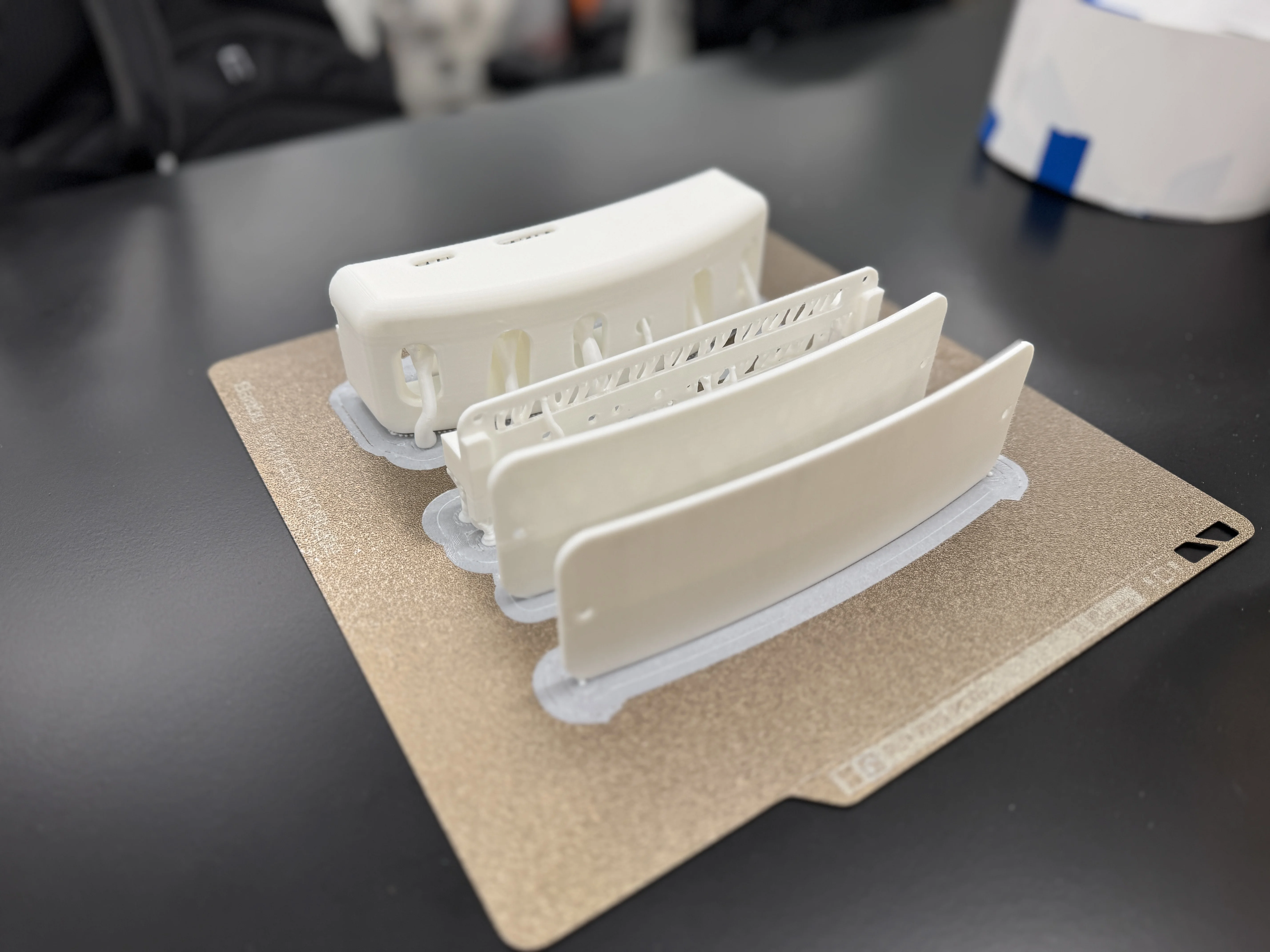

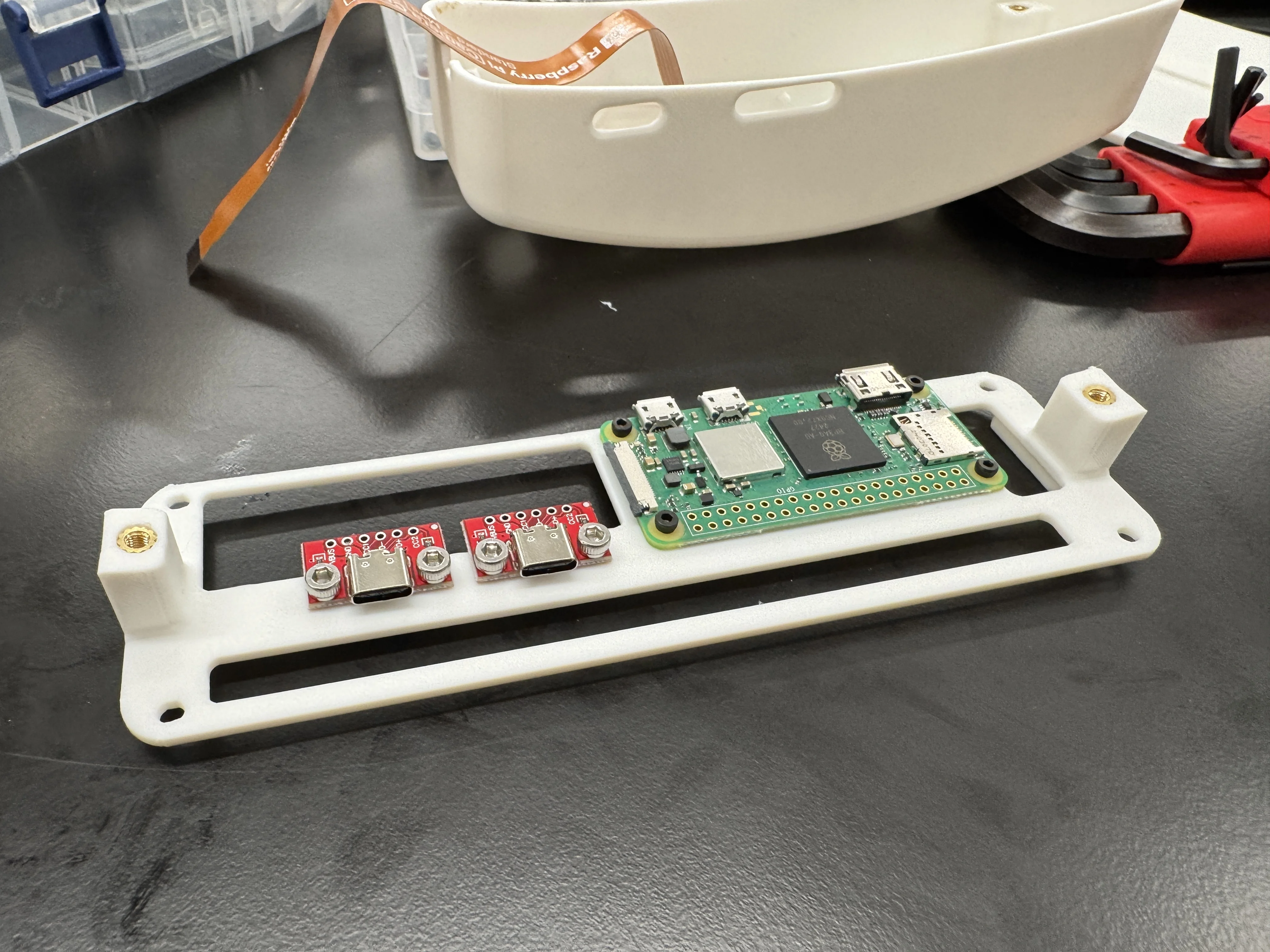

Similar to last year, I led Echo’s hardware and electronics, but this time I focused on pushing the design as close to a consumer-level product as possible. The device was 3D-printed on a Stratasys PolyJet and ran off a 10,000 mAh battery stored in the user’s pocket (Apple Vision Pro style), capable of providing multi-day battery life. At its core, Echo used a Raspberry Pi Zero 2W and five TF-Luna LiDAR sensors with a range of up to 8 m. The Raspberry Pi AI Camera paired the Sony IMX500 imaging sensor with a MobileNet SSD neural network to perform object detection.

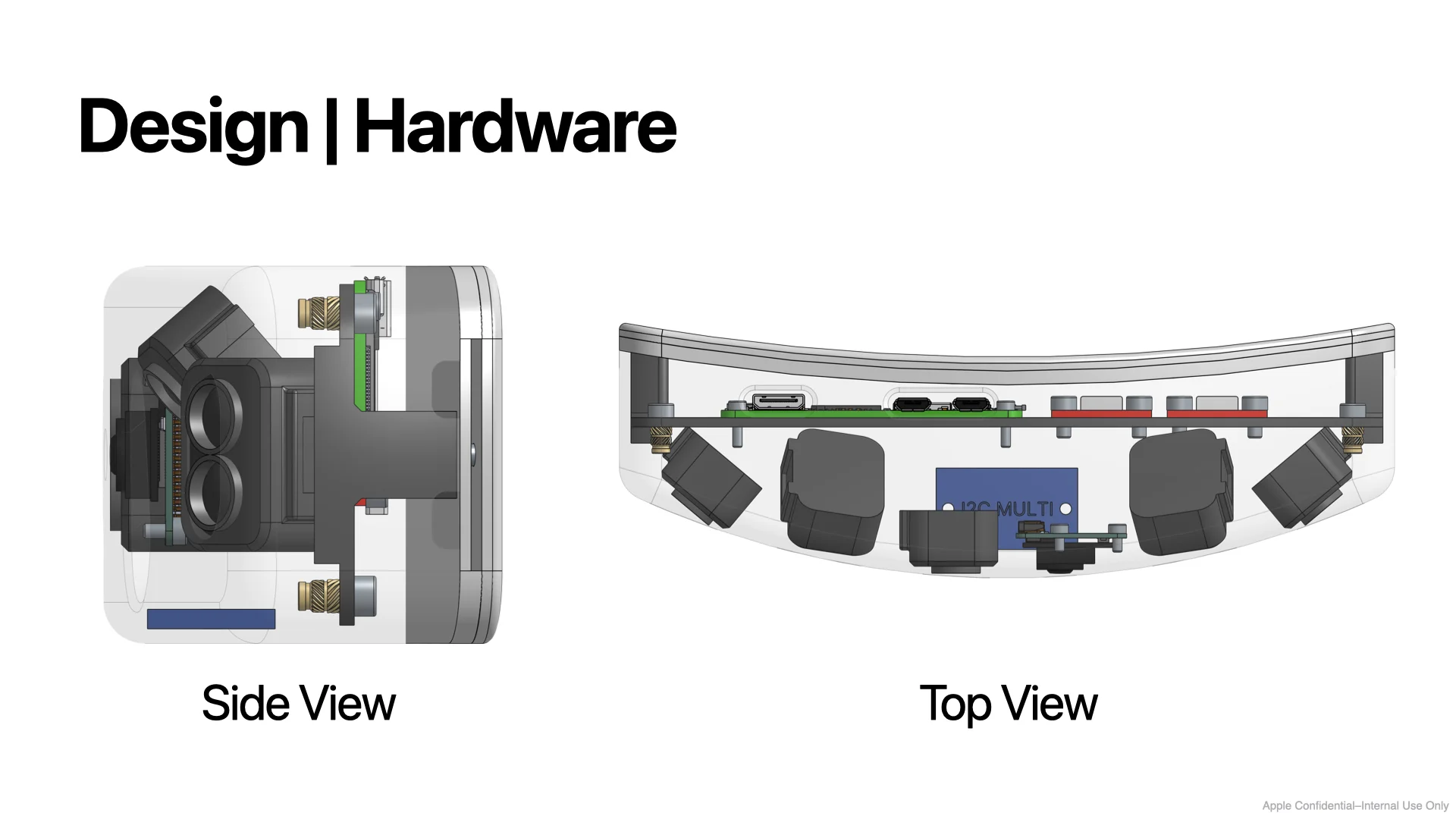

Hardware

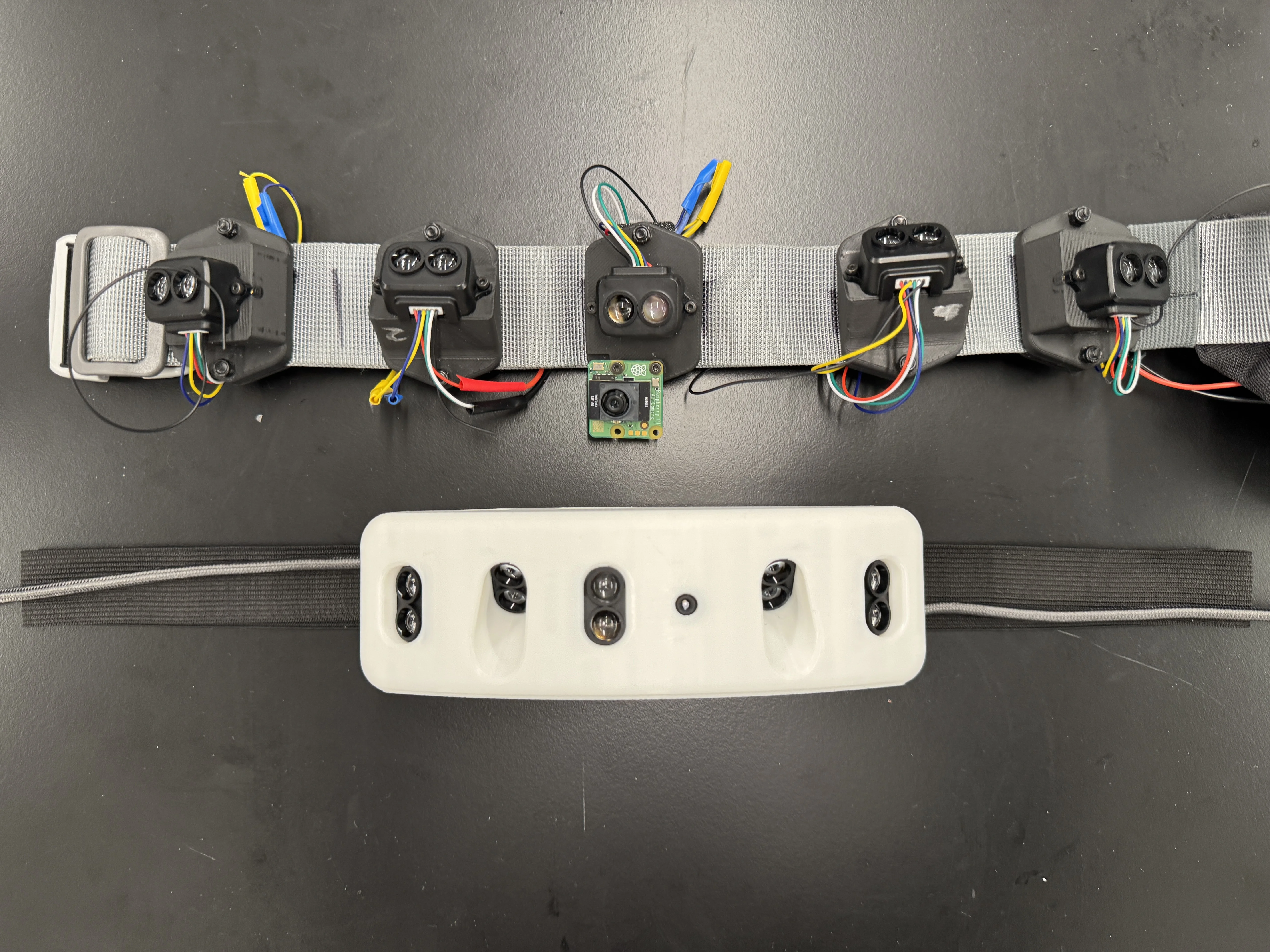

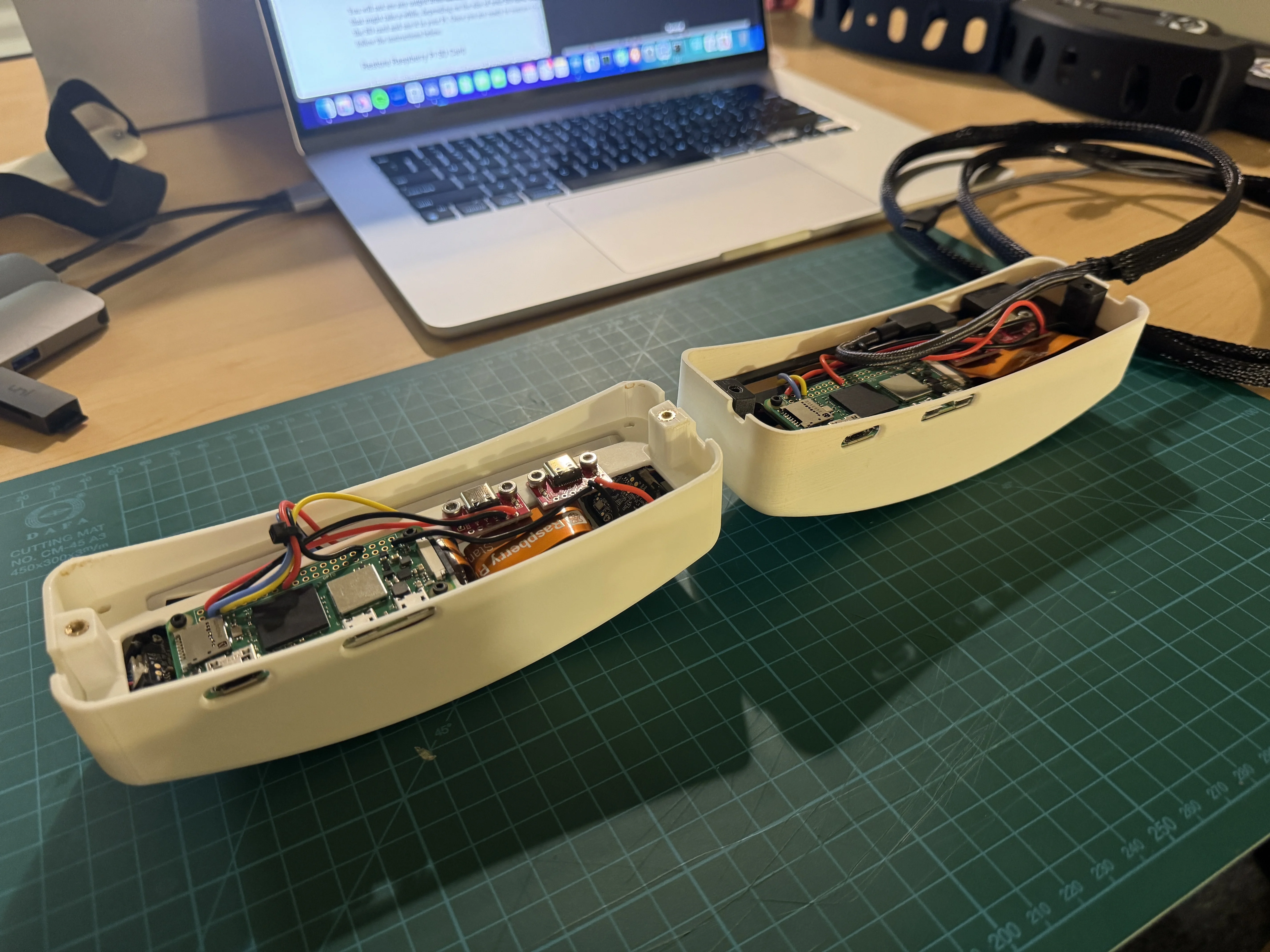

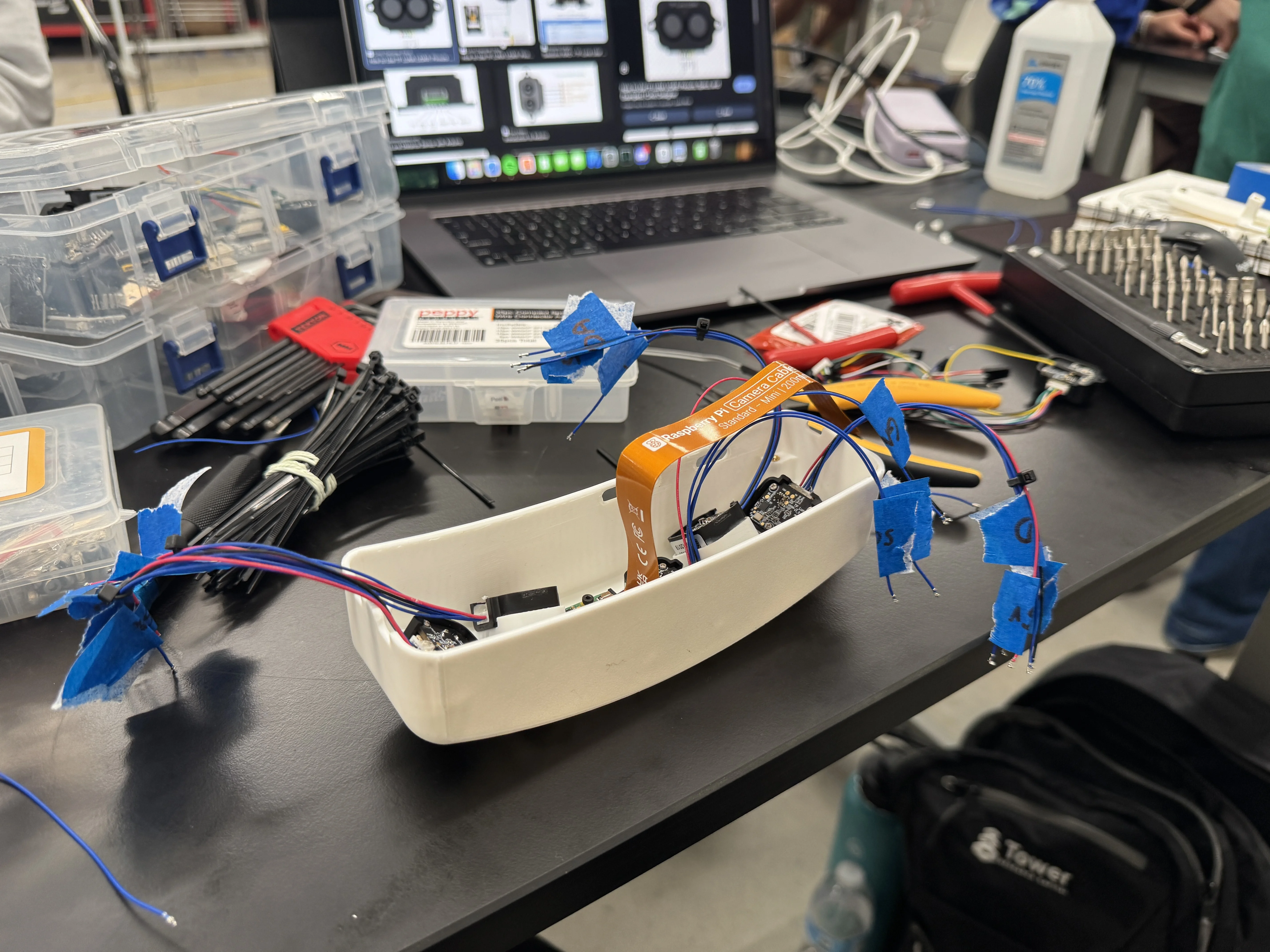

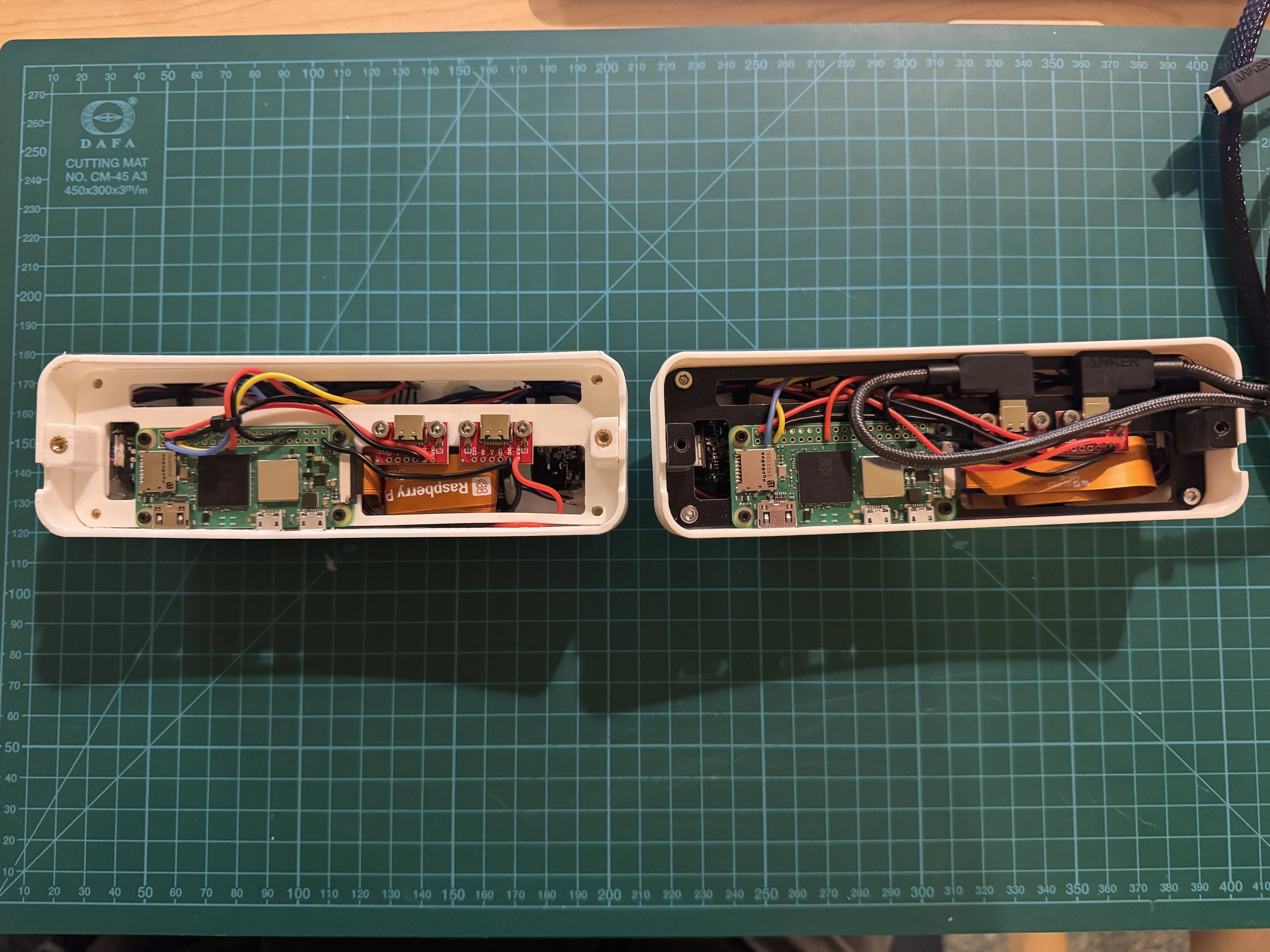

Echo had two major versions. The first was intended to test the overall concept and serve as a testing bench while the final product was worked on in parallel; however, this strategy didn’t work as planned. I ultimately built three complete Echo devices: one version 1 prototype and two version 2s (one serving as a backup for the live demo).

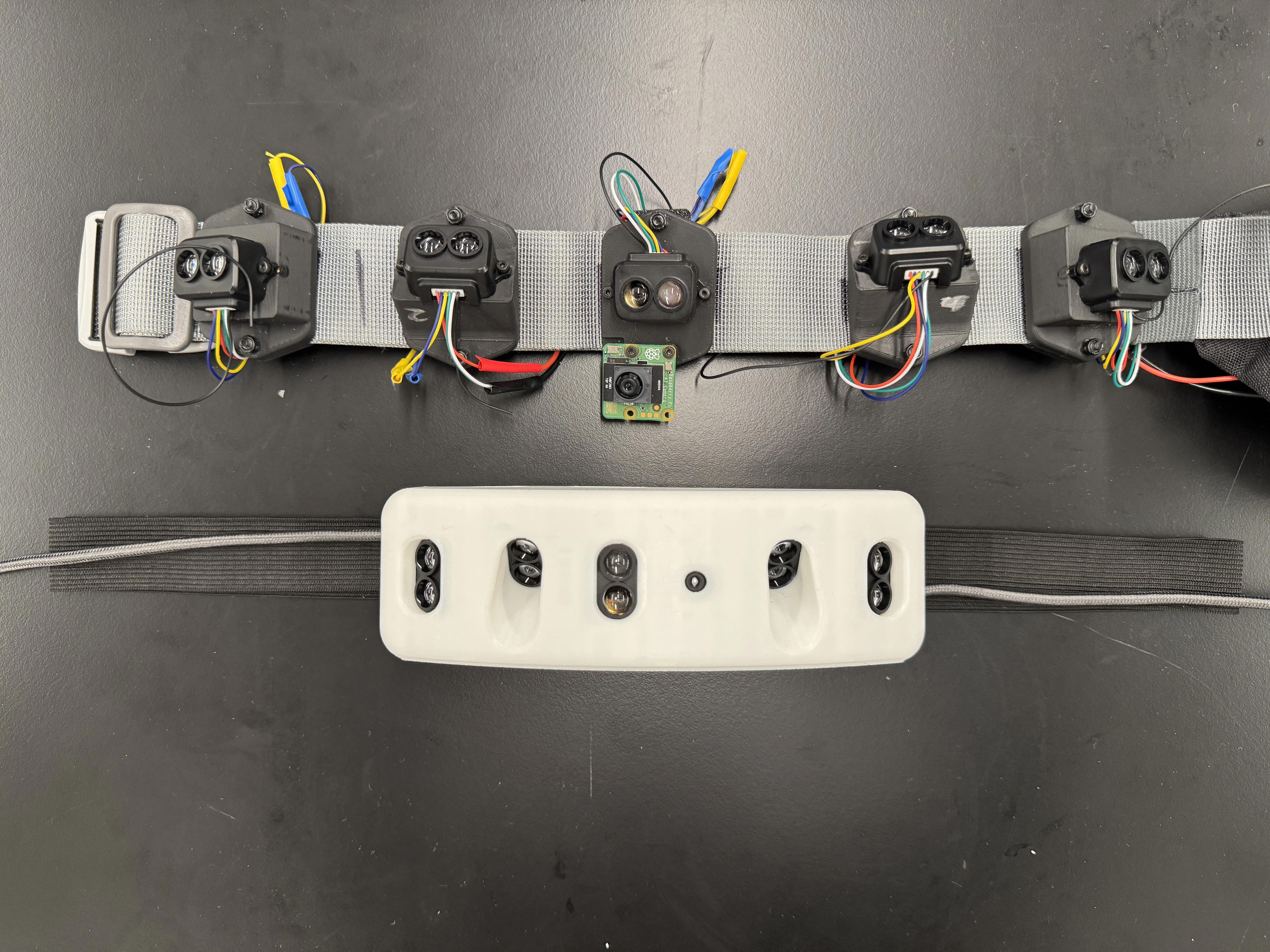

Version 1 (top) vs. Version 2 (bottom)

Echo’s key functionality lies in its five LiDAR sensors, which are angled to maximize both the horizontal field of view (~180°, approximating a human’s) and the vertical FOV. In addition, a Raspberry Pi AI Camera performs onboard object detection, taking the processing load off the main Raspberry Pi Zero 2W.
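To make the sensor layout concrete, here is a minimal Python sketch of how five readings could be mapped onto a ~180° horizontal sweep. It is an illustration, not Echo's firmware: the even angular spacing, the function names `sensor_angles` and `nearest_obstacle`, and the rule for discarding invalid readings are all assumptions.

```python
def sensor_angles(count=5, fov_deg=180.0):
    """Center angle of each sensor, assuming the FOV is split into equal sectors.

    Angles are in degrees, with 0 pointing straight ahead and negative
    values to the wearer's left. Even spacing is an assumption.
    """
    sector = fov_deg / count
    return [-fov_deg / 2 + sector * (i + 0.5) for i in range(count)]


def nearest_obstacle(readings_m, max_range_m=8.0):
    """Return (angle_deg, distance_m) for the closest valid reading, or None.

    A reading is treated as invalid if it is not positive or exceeds the
    sensor's stated maximum range of 8 m.
    """
    angles = sensor_angles(count=len(readings_m))
    valid = [
        (distance, angle)
        for distance, angle in zip(readings_m, angles)
        if 0.0 < distance <= max_range_m
    ]
    if not valid:
        return None
    distance, angle = min(valid)
    return (angle, distance)
```

For example, `nearest_obstacle([3.0, 9.5, 1.2, 0.0, 4.0])` ignores the out-of-range and zero readings and reports the 1.2 m obstacle seen by the center sensor.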

Echo has two main parts: the main housing and the embedded haptic belt. Within the main housing is an internal “sled” where all the electronics are mounted. The LiDAR sensors are embedded directly into the housing, and an outer cover screws into heat-set inserts to close the assembly.

Echo provides on-device feedback through haptic motors embedded in its 3D-printed silicone haptic belt, which are controlled via digital I/O pins on the Raspberry Pi Zero 2W. The haptic system communicates in two ways with a set priority. First, it uses different vibration patterns to communicate obstacle type. Second, and with higher priority, it conveys distance by firing specific haptic motors at varying intensities.
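The priority scheme can be sketched in a few lines of Python. This is a hypothetical illustration rather than the code that ran on Echo: the pattern table, the 2 m alert threshold, the linear intensity curve, and the names `intensity_for_distance` and `choose_feedback` are all assumptions made for the example.

```python
# Hypothetical pulse patterns (seconds on per pulse), keyed by obstacle type.
PATTERNS = {
    "person": (0.1, 0.1, 0.1),
    "vehicle": (0.4,),
    "stairs": (0.2, 0.2),
}


def intensity_for_distance(distance_m, max_range_m=8.0):
    """Map distance to a 0.0-1.0 intensity: closer obstacles feel stronger.

    The linear curve is an assumption; the 8 m limit matches the sensor range.
    """
    clamped = min(max(distance_m, 0.0), max_range_m)
    return 1.0 - clamped / max_range_m


def choose_feedback(distance_m, obstacle_type, alert_range_m=2.0):
    """Pick one haptic command, giving the distance cue priority.

    Returns ("distance", intensity), ("pattern", pulses), or ("idle", None).
    """
    # Higher priority: a nearby obstacle always produces the distance cue.
    if distance_m is not None and distance_m <= alert_range_m:
        return ("distance", intensity_for_distance(distance_m))
    # Lower priority: otherwise, signal what kind of obstacle was detected.
    if obstacle_type in PATTERNS:
        return ("pattern", PATTERNS[obstacle_type])
    return ("idle", None)
```

On real hardware, the returned intensity would likely be used as a PWM duty cycle on the motor's pin, since a plain digital output can only switch a motor fully on or off.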

Haptic Belt

Electrical

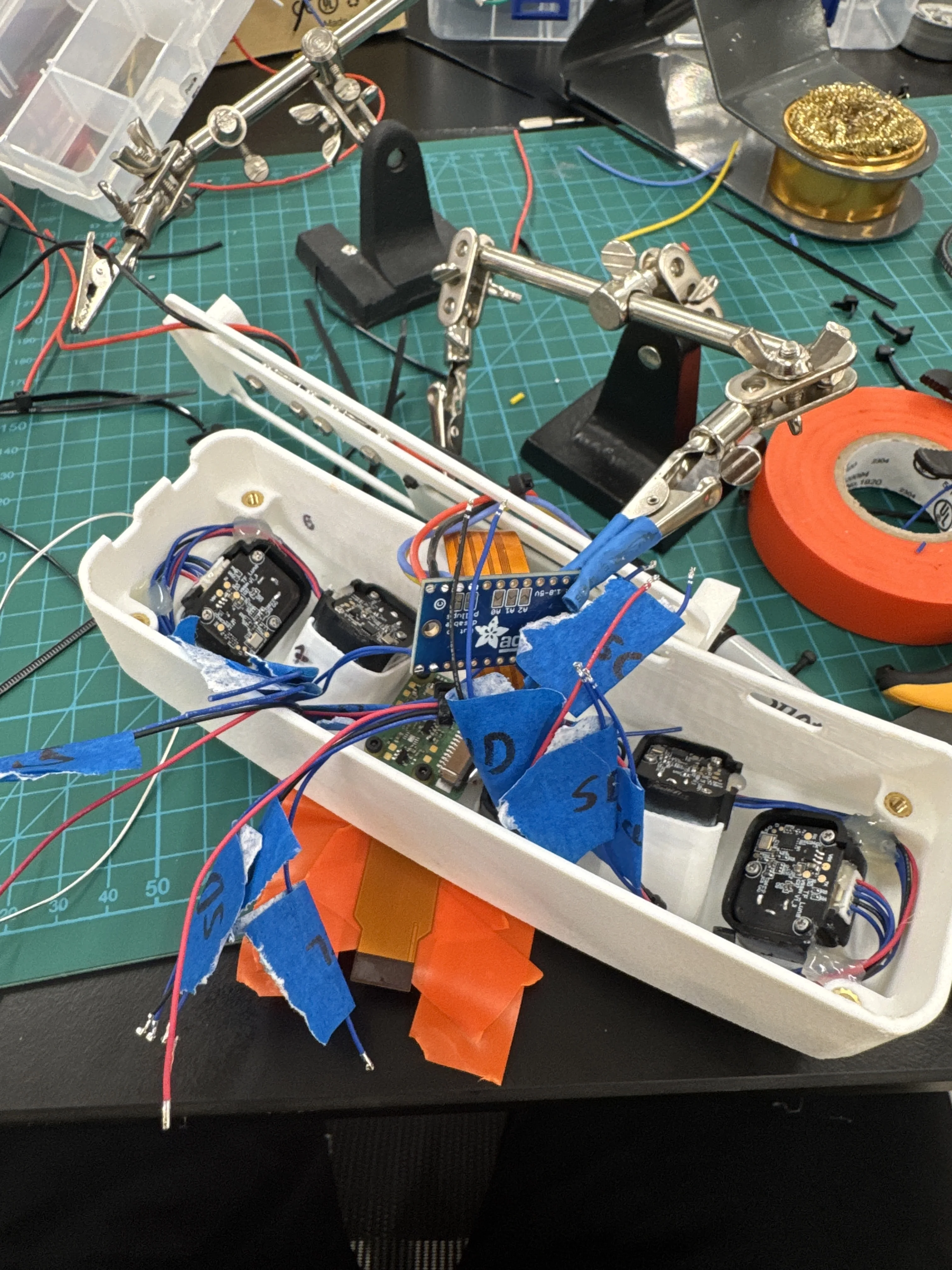

V1 wiring

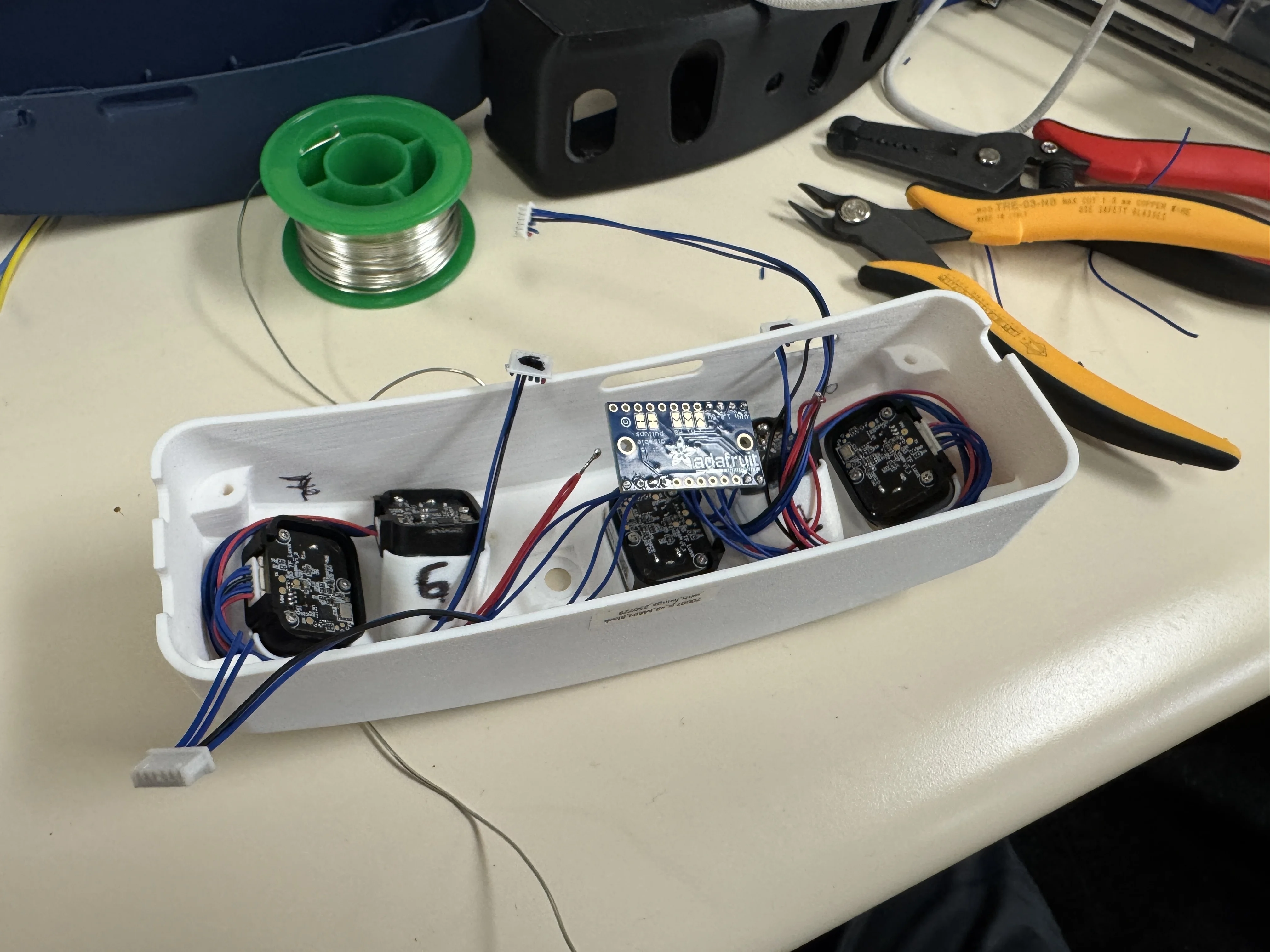

V2 wiring

Wiring Echo and its electronics sled was an interesting challenge. An important discovery while working on V1 related to the I2C bus. My plan was to route all five LiDAR sensors to the Raspberry Pi Zero 2W’s single I2C bus using Wago connectors and change their addresses in software. However, this proved unworkable: Wago connectors are poorly suited to reliable I2C communication, and the bus required dedicated pull-up resistors and a logic level converter. I carried this lesson over to V2, where I solved the issue by adding an I2C multiplexer, which integrates all of this functionality.
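As a rough sketch of how a multiplexer lets five identical sensors share one bus, the Python below selects a channel and then reads the sensor at its unchanged address. The specific multiplexer is not named above, so this assumes a TCA9548A-style part at address 0x70 (channel selected by writing a one-hot byte) and TF-Luna sensors at their default address 0x10 with distance in centimeters at registers 0x00 and 0x01. The `bus` argument is any object exposing `write_byte` and `read_i2c_block_data`, such as an `smbus2.SMBus` instance.

```python
MUX_ADDR = 0x70       # assumed: TCA9548A-style multiplexer default address
LIDAR_ADDR = 0x10     # assumed: TF-Luna default I2C address
DIST_REGISTER = 0x00  # assumed: distance low byte, high byte follows at 0x01


def channel_mask(channel):
    """One-hot control byte that enables a single multiplexer channel (0-7)."""
    if not 0 <= channel <= 7:
        raise ValueError("multiplexer channel must be between 0 and 7")
    return 1 << channel


def read_distance_cm(bus, channel):
    """Select one multiplexer channel, then read that sensor's distance in cm."""
    bus.write_byte(MUX_ADDR, channel_mask(channel))
    low, high = bus.read_i2c_block_data(LIDAR_ADDR, DIST_REGISTER, 2)
    return low | (high << 8)


def read_all(bus, channels=range(5)):
    """Poll each sensor in turn; every sensor keeps the same I2C address."""
    return [read_distance_cm(bus, channel) for channel in channels]
```

On the Pi this could be called as `read_all(SMBus(1))` after `from smbus2 import SMBus`. Because only one channel is active at a time, none of the sensors needs its address changed in software.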

Power came from the battery bank through two USB-C breakout boards: one powered the LiDAR array, and the other powered the Raspberry Pi Zero 2W. Splitting the supply ensured the sensors’ power draw wouldn’t overload the Pi’s internal regulators.

Gallery

Check out Echo’s CAD here!